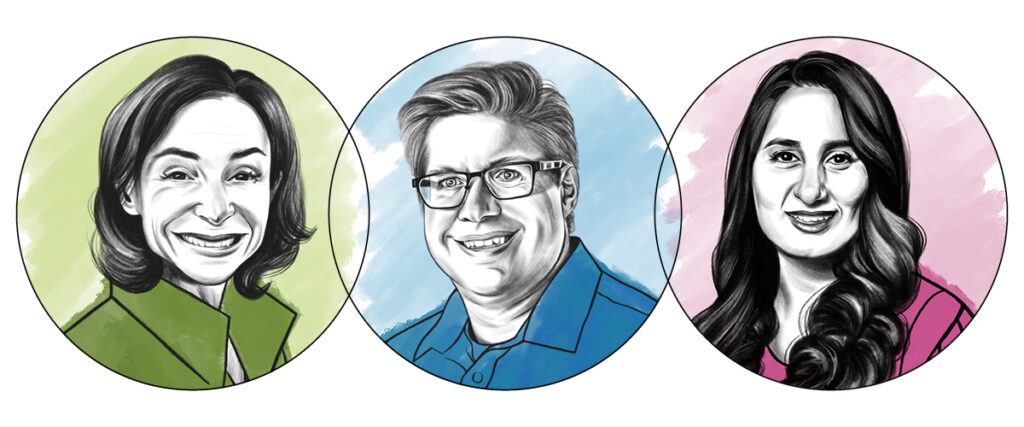

Intersection is a recurring feature in Marquette Magazine that brings together faculty members from different disciplines to share perspectives on a consequential topic. This time, with large-language models such as ChatGPT and artificial-intelligence-driven image and video generators exploding into our lives, schools and economy, three professors reflect on the change AI is making us grapple with. Following are key excerpts from a conversation with them.

What’s the most promising or exciting thing you’ve seen from the world of AI over the past year?

MZ: When I study how users interact with these technologies, there’s a lot of utility. I’m not even thinking about ChatGPT or similar platforms, but about how smart devices are becoming better at processing voice commands and predicting my needs and wants. I joke in my classes that we critique the issues and challenges posed by AI, but I rely on Apple Maps. I rely on Grammarly to autocorrect my grammar. So, there are definitely benefits. AI is helping to make a lot of these tools better for a lot of people.

NY: Generative AI is the most promising thing that I have seen. I have been using it in a lot of useful ways for my research — to address challenges that had been hard to solve. I can generate synthetic data to compensate for a lack of enough data. I can remove the biases in my data set. This is something that has amazed me in the last few years.

JSL: The way AI can handle huge sets of data has wonderful implications for our approaches to major ethical problems, such as climate change, because AI can handle calculations that an individual person can’t. Same with medicine. So on those issues AI could be really promising — especially when we make accountability and transparency priorities, so people understand, at least somewhat, what’s going on behind what AI generated for them.

What has been the most concerning development — or the AI-related challenge that most urgently needs addressing?

JSL: For professors in the humanities, what’s going on in our circles is the question of what we’re trying to teach our students to do, composing an essay, most obviously. Is that being perceived, or is it in fact, not even useful to them anymore? How can we convince our students that the creative process — which is something we’ve been given by God, the act of writing and the rigor that entails — is actually important for them? It’s like a basketball player practicing their shots. There are some things you just can’t have a machine do for you, for your own growth. (Saint-Laurent also cited concerns with the use of deep fakes by malicious actors or states, and with AI potentially worsening inequalities.)

NY: There are a few things I should mention: ethical and privacy risks, the use of generative AI for creating content — fake audio, video, images and even assignments at school — that can put people at risk. My other concern is our need to learn how to have human–AI collaboration … because it’s crucial to know what you need from AI and how to use it correctly. A final point in terms of using these algorithms is their explainability. Do their processes make sense? The algorithms are getting better and better, but the interpretability of the AI is another concern.

MZ: It’s hard to add to the points already being made. But a broader concern that I share with students is an overall kind of quantification bias that’s emerged that assumes AI or anything data-driven will be inherently correct and better than having a human make a decision or a prediction. We rely on algorithmic and AI-driven systems without having that explainability as Nasim was saying. And now that data — the things we can compute and put into a model — are what matters most, that could have an impact on things like humanness or imperfection or broader kind of humanistic qualities that we’re losing because of the reliance on these models.

How dramatically do you see AI impacting higher education? How should Marquette prepare for that impact?

MZ: Part of me asks, why are we treating this differently than the emergence of the calculator or an online encyclopedia? Students have always been able to copy or find shortcuts for their work, but it does seem to be at a different scale now. Does that require us to change our mode of instruction or what we’re expecting of students? I suspect we expect different things in a math classroom than we did 30 years ago because memorizing the multiplication tables is just not as necessary.

Still, in our computer science classrooms, we’re struggling because there are tools out there that can write code for our coding assignments. Students can ace the assignments, but when there’s an exam and they’re forced to do it on their own, they’re suddenly struggling, realizing what they’re not learning. That puts pressure on us as instructors to help students see that difference.

JSL: It’s kind of hard to overestimate the impact. Frankly, I’m actually worried about the divisions in the faculty and the students that this might cause. This has to be an interdisciplinary endeavor for us as a university, so we are talking to one another in important ways and not bringing our own bias against other disciplines, saying, “We know this better than you do.” It’s impacting all of us, and it’s all of our responsibility.

We’re not going to have a unified voice, but we must be able to identify what our concerns are, what is in keeping with the Jesuit mission of the university, how are those still really important questions for us all, so we’re not becoming like the Luddites over here and the techies over there. No, we have to put our students at the center and also us as professors … to really try to get the human heart back in there. We can see what AI can do. Yes, it is amazing, but it also can give us greater awe about what the human person is too, what our capacities are, and to help our students not lose sight of that.

NY: Most of the faculty are now struggling with assignments and things that are given to them by students who may be using AI tools. It’s all part of an adjustment process. It reminds me of how people were not ready to use elevators when they were first introduced; people were still using stairs. We need to get ready. As faculty, we need to learn how to use these tools and teach students how to use these tools correctly. There is now software to help us detect fakes and copied information. So, we need to get ready and define rules. Then we can probably even benefit in the classroom. AI may help us find personalized content for the students of the future based on their needs and GPA, and recommendations for custom course content. We could benefit as people did when they began using elevators.

When you think of our society’s path ahead with AI, what words come to mind? How prepared are we for our AI future?

JSL: Our society’s path ahead? That’s a big question. So, responsibility has to be very important. Transparency, accountability, humanity too. As I said before, my main concern is that the machine itself always be accountable to the user. That is to say that we never lose sight that behind the AI there are humans.

NY: I just think all about ethics. Ethical rules for AI need to be defined. And also I’m thinking that as they extend algorithms, they also need to extend work on those biases and ethics as well. They are so important, and they need to progress at the same time.

MZ: So, all the above. And one other phrase you hear a lot of people talk about in the discourse around AI right now is “existential crisis,” and I don’t think that’s the thing to worry about. Just as we’ve been talking about, this isn’t a concern that AI is going to take over the world; it’s a concern about how it’s going to impact real people right now, especially vulnerable or marginalized populations. And it’s too easy for someone like Elon Musk to say, “Oh, we need to make sure AI doesn’t become sentient and take control of things.” But that’s not at all what the actual problem is. So, I think we’re in the same vein here, like I have cautious optimism that we can benefit from these kinds of technologies. But instead of moving fast and breaking things, I think we need to slow down and be careful.